
8/30/2019 Airtel Parallel Ringing Activation

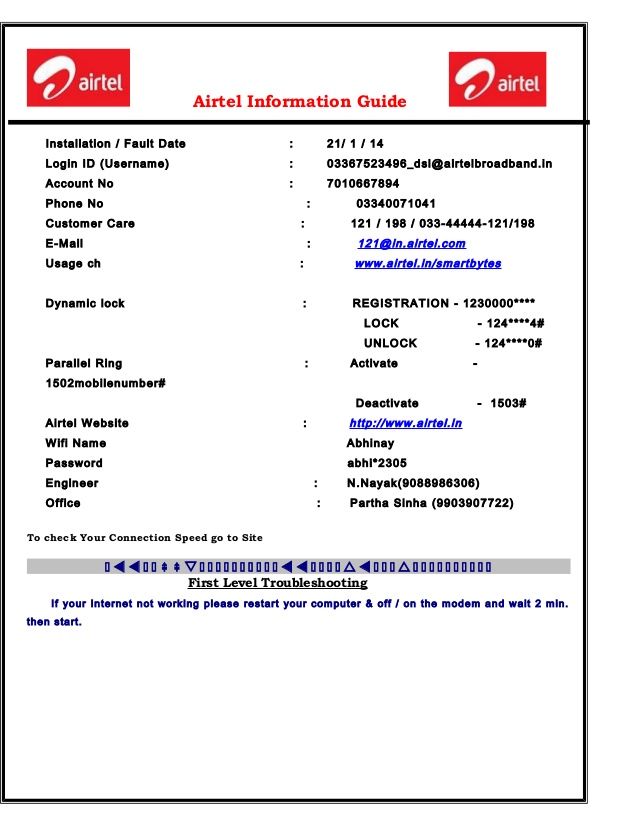

Customer Care Number 44444198 / 01

Registered Office of Airtel Landline Chennai

Airtel Landline, also known as fixed line, is a service of the Airtel telecommunications company. Customers who want to contact the head office about services or business deals can visit:

Bharti Airtel Limited (A Bharti Enterprise), Bharti Crescent, 1 Nelson Mandela Road, Vasant Kunj, Phase II, New Delhi - 110 070. Tel. No.: + 6100 Fax No.: + 6411

Airtel Landline Chennai Complaint Numbers

Customers of Airtel Landline Chennai can call the helpline numbers below with queries and complaints about services. The toll-free service of Airtel Landline Chennai is available 24 hours a day without charge.

Airtel Landline Chennai Official Address

The official address of Airtel Landline Chennai is Chennai, India.

Contact Airtel Landline Chennai Customer Care by Email

Send email to ( [email protected]).

Airtel Landline Chennai Website

For more information about Airtel Landline Chennai customer service, see www.airtel.in.

Airtel Landline Chennai Customer Care Helpline / Complaint Number

Airtel Landline Chennai customer care contact number is.

Airtel Landline Chennai Toll Free Customer Service Enquiry Number

The Airtel Landline Chennai contact number is not guaranteed to be a toll-free customer service helpline or enquiry number, or to be available 24x7.

Set up call diversion

To divert when there is no answer, type the following into your phone: **61* followed by your desired telephone number, then add **. For example, 0191.

Add how many seconds (in 5-second increments) you want your phone to ring before diverting (min 05, max 30). Add #.
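The string typed so far can be assembled and checked in code. The sketch below is only an illustration: it assumes the standard GSM form `**61*<number>**<seconds>#` for a no-answer divert, which is an assumption rather than something confirmed for this network, and the function name and the telephone number in the usage line are hypothetical.

```python
CANCEL_NO_ANSWER_DIVERT = "##61#"  # cancel code quoted in the text


def no_answer_divert_code(number: str, seconds: int) -> str:
    """Build a no-answer divert string.

    Assumed form (standard GSM, not confirmed for this operator):
    **61*<number>**<seconds>#
    """
    # Ring time must be 05..30 seconds in 5-second increments.
    if seconds % 5 != 0 or not 5 <= seconds <= 30:
        raise ValueError("ring time must be 5 to 30 seconds in steps of 5")
    digits = number.replace(" ", "")
    # Allow an optional leading "+" for international format.
    if not digits.lstrip("+").isdigit():
        raise ValueError("telephone number must contain only digits")
    # Seconds are written with two digits, e.g. 05.
    return f"**61*{digits}**{seconds:02d}#"


# Hypothetical number, for illustration only.
code = no_answer_divert_code("0191 000 0000", 15)
```

Validating the ring time before building the string avoids sending a code the network would reject.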

Press the 'call' button and wait for the screen message to confirm the service has been set up. To cancel, call ##61# and again wait for the screen message to confirm the change.

To divert when you are unreachable (e.g.

A frequent question from customers is ‘How can I change the activation timing?’. The short answer is that you cannot: this is hard-coded in the SQL Server engine and cannot be customized. But the reason most people want the timing changed is to start all the configured MAX_QUEUE_READERS instances at once. I will show you an unorthodox trick that can be used to achieve this result. Normally the activation mechanism monitors the queues and the RECEIVEs occurring and decides when it is appropriate to launch a new instance of the activated procedure. However, there is another, lesser-known side of activation: the event notification.

Those of you familiar with the for sure have learned about these events. This works similarly to the classical activated procedure, but instead of launching an instance of the activated procedure, a notification is sent to the subscribed service. The key difference is that there are no restrictions on how many different notification subscriptions can be created for the same QUEUE_ACTIVATION event! And when it is time to activate, all the subscribers are notified. These notifications are ultimately ordinary Service Broker messages sent to the subscribed service. These subscribed services run on queues that, obviously, can have activated procedures attached.

See where I am going? You can use the subscribed service’s queue activation to launch a separate procedure per subscribed service for each original queue activation notification. So if you create 5 QUEUE_ACTIVATION subscriptions from 5 separate services, you will launch 5 procedures (nearly) simultaneously!

If your tape library has multiple drives, you can use the drives simultaneously to write data to tape. This option is useful if you have many tape jobs running at the same time or a lot of data that must be written to tape within a limited backup window. To enable tape parallel processing, configure the media pool options: in the media pool settings, set the maximum number of drives that can be used for simultaneous data processing. For details, see.

For parallel processing, you can utilize multiple drives of one tape device. Note: You cannot enable parallel processing for GFS media pools.

To process tape data in parallel, you can split the data across drives in 2 ways:

Parallel FFTW

Parallel Versions of FFTW

Starting with FFTW 1.1, we are including parallel Fourier transform subroutines in the FFTW distribution. Parallel computation is a very important issue for many users, but few (no?) parallel FFT codes are publicly available. We hope that we can begin to fill this gap. We are currently experimenting with several parallel versions of FFTW. These have not yet been optimized nearly as fully as FFTW itself, nor have they been benchmarked against other parallel FFT software (mainly because parallel FFT codes are few and far between).

They are, however, a start in the right direction. Parallel versions of FFTW exist for:

Cilk: Cilk is a superset of C developed at MIT. It allows easy parallelization of C code and automates load balancing between processors. It is currently only available on shared memory SMP architectures. The Cilk FFTW routines parallelize both one- and multi-dimensional transforms, and are included in.

Threads: Threads are a low-level mechanism for managing concurrent computations that share a single address space. They are available in some form on most SMP systems. FFTW currently supports Solaris, POSIX, and threads, and should be easy to port to other systems. (Some experimental code is in place for MacOS MP and Win32 threads.) The threads FFTW routines parallelize both one- and multi-dimensional transforms, and are included in.

MPI: MPI is a standard message-passing library available on many platforms. Currently, we have in-place, multi-dimensional parallel transforms for FFTW that use MPI. These routines differ from the threads and Cilk versions of FFTW in that they work on distributed memory machines in addition to shared-memory architectures. The MPI FFTW routines are included in.

Benchmarks of the parallel FFTs

We have done a small amount of benchmarking of the parallel versions of FFTW.


Unfortunately, there isn't much to benchmark them against except each other: we have had some difficulty finding other publicly available parallel FFT software. On the Cray T3D, however, we were able to compare Cray's PCCFFT3D routine to FFTW. Benchmark results are available for the following machines:

(167 MHz Sun UltraSPARC-I). (Shared memory SMP.)

(150 MHz DEC Alphas) at the. (Distributed memory.)

How the parallel FFTW transforms work

The following is a very brief overview of the methods with which we currently parallelize FFTW.

One-dimensional Parallel FFT

The Cooley-Tukey algorithm that FFTW uses to perform one-dimensional transforms works by breaking a problem of size N = N1·N2 into N2 problems of size N1 and N1 problems of size N2.


The way in which we parallelize this transform, then, is simply to divide these sub-problems equally among different threads. In Cilk, this amounts to little more than adding spawn keywords in front of subroutine calls (thanks to FFTW's explicitly recursive structure). Currently, we keep the data in the same order as we do for the uniprocessor code; in the future, we may reorder the data at each phase of the computation to achieve better segregation of the data between processors.
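The decomposition and the division of sub-problems among threads can be sketched in a few lines of Python. This is only an illustration of the idea, not FFTW's code: the function names are hypothetical, a naive DFT stands in for FFTW's recursive sub-transforms, and Python threads are used purely to show how the independent sub-problems are handed out (they will not give a real speedup in CPython).

```python
import cmath
from concurrent.futures import ThreadPoolExecutor


def dft(x):
    """Naive O(n^2) DFT, standing in for a recursive sub-transform."""
    n = len(x)
    return [sum(x[j] * cmath.exp(-2j * cmath.pi * j * k / n) for j in range(n))
            for k in range(n)]


def parallel_fft(x, n1, n2, workers=4):
    """One Cooley-Tukey step for N = n1 * n2, sub-problems spread over threads."""
    n = n1 * n2
    assert len(x) == n
    with ThreadPoolExecutor(max_workers=workers) as pool:
        # n2 independent problems of size n1: the stride-n2 subsequences of x.
        inner = list(pool.map(dft, [x[r::n2] for r in range(n2)]))
        # Multiply by the twiddle factors exp(-2*pi*i * r * k1 / N).
        for r in range(n2):
            for k1 in range(n1):
                inner[r][k1] *= cmath.exp(-2j * cmath.pi * r * k1 / n)
        # n1 independent problems of size n2, one for each k1.
        columns = [[inner[r][k1] for r in range(n2)] for k1 in range(n1)]
        outer = list(pool.map(dft, columns))
    # Output index k = k1 + n1 * k2.
    out = [0j] * n
    for k1 in range(n1):
        for k2 in range(n2):
            out[k1 + n1 * k2] = outer[k1][k2]
    return out
```

Each call handed to `pool.map` is independent of the others, which is what makes dividing the sub-problems equally among threads straightforward.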


Multi-dimensional Parallel FFT (shared memory)

Multi-dimensional transforms consist of many one-dimensional transforms along the rows, columns, etcetera of a matrix. For the Cilk and threads versions of FFTW, we simply divide these one-dimensional transforms equally between the processors.

Again, we leave the data in the same order as it is in the uniprocessor case. Unfortunately, this means that the arrays being transformed by different processors are interleaved in memory, resulting in more memory contention than is desirable. We are investigating ways to alleviate this problem.

Multi-dimensional Parallel FFT (distributed memory)

The MPI code works differently, since it is designed for distributed memory systems: here, each processor has its own memory or address space in which it holds a portion of the data. The data on a processor is inaccessible to other processors except by explicit message passing. In the MPI version of FFTW, we assume that a multi-dimensional array is distributed across the rows (the first dimension) of the data. To perform the FFT of this data, each processor first transforms all the dimensions of the data that are completely local to it (e.g.

Then, the processors have to perform a transpose of the data in order to get the remaining dimension (the columns) local to a processor. This dimension is then Fourier transformed, and the data is (optionally) transposed back to its original order. The hardest part of the multi-dimensional, distributed FFT is the transpose. A transpose is one of those operations that sounds simple, but is very tricky to implement in practice.
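The sequence of local transforms, transpose, and remaining transform can be sketched for the two-dimensional case on a single machine. This is a minimal illustration under stated assumptions, not the MPI code: the function names are hypothetical, a naive DFT stands in for the one-dimensional transforms, and the transpose is done out of place.

```python
import cmath


def dft(x):
    """Naive O(n^2) DFT used for every one-dimensional transform."""
    n = len(x)
    return [sum(x[j] * cmath.exp(-2j * cmath.pi * j * k / n) for j in range(n))
            for k in range(n)]


def transpose(m):
    """Out-of-place transpose of a list-of-rows matrix."""
    return [list(col) for col in zip(*m)]


def fft2_by_transpose(a, transpose_back=True):
    """2-D DFT as row transforms, a transpose, and row transforms again."""
    # Step 1: transform along the rows, the dimension local to each owner.
    rows_done = [dft(row) for row in a]
    # Step 2: transpose so the former columns become rows.
    t = transpose(rows_done)
    # Step 3: transform the remaining dimension.
    cols_done = [dft(row) for row in t]
    # Step 4 (optional): transpose back to the original order.
    return transpose(cols_done) if transpose_back else cols_done
```

Skipping the final transpose leaves the result in transposed order, which saves one communication phase when the caller can work with that layout.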

The real subtlety comes in when you want to do a transpose in place and with minimal communication. Essentially, the way we do this is to do an in-place transpose of the data local to each processor (itself a non-trivial problem), exchange blocks of data with every other processor, and then complete the transpose by a further (precomputed) permutation on the data. All of this involves an appalling amount of bookkeeping, especially when the array dimensions aren't divisible by the number of processors. The communications phase is an example of a complete exchange: an operation in which every processor sends a different message to every other processor. (This is probably the worst thing that you can do to a memory architecture.) The whole process would be simpler, and possibly faster, if the transpose weren't required to be in-place. We felt, however, that if a problem is large enough to require a distributed memory multiprocessor, it is probably large enough that the capability to perform a transform in-place is essential.
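The complete exchange can be illustrated with a small simulation. The sketch below is deliberately the simpler out-of-place variant, not the in-place scheme described above: processors are simulated as list entries, the function name is hypothetical, and the column count is assumed to be divisible by the number of processors, which sidesteps the bookkeeping mentioned in the text.

```python
def distributed_transpose(local, p):
    """Transpose a row-distributed matrix by a simulated complete exchange.

    local[q] is the list of rows held by simulated processor q.
    Returns the same structure for the transposed matrix.
    """
    rows_per = len(local[0])
    cols = len(local[0][0])
    assert cols % p == 0, "sketch assumes columns divide evenly"
    cols_per = cols // p
    # Complete exchange: outbox[src][dst] is the block that src sends to dst,
    # so every processor sends a different message to every other processor.
    outbox = [[[row[dst * cols_per:(dst + 1) * cols_per] for row in local[src]]
               for dst in range(p)]
              for src in range(p)]
    result = []
    for dst in range(p):
        # dst ends up owning cols_per rows of the transposed matrix.
        new_rows = [[] for _ in range(cols_per)]
        for src in range(p):
            block = outbox[src][dst]  # rows_per x cols_per
            for j in range(cols_per):
                # Transposing the block locally: column j becomes part of row j.
                new_rows[j].extend(block[i][j] for i in range(rows_per))
        result.append(new_rows)
    return result
```

With p processors the exchange moves p × p blocks, which is why this phase dominates the communication cost of the transform.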
